Build AI that matters

Dependable AI systems for real-world impact

João Galego $$\left|\text{🧠}\right>$$

Head of AI @ CSW

Invited Professor @ ISEG

$ whoami

Academic Background

MSc Physics

PgDip Forensics*

PhD Cognitive Science / ABD**

* Not-so-fun fact: I once performed an autopsy

** Dropped out to live life and have fun doing it

Professional Experience

Lead ML Engineer

Solutions Architect

Head of AI

TL;DR

Break things at scale

Build things faster

Make brains* go brrr

* all brain types welcome!

Agenda 📋

Mind the gap

great demos, fragile products

Why AI fails

and why models aren't the problem

Dependable AI

models $\rightarrow$ systems $\rightarrow$ society

AI that (actually) matters

building systems people can trust

PRFAQ

what you might be wondering,

but were afraid to ask

This talk was inspired by...

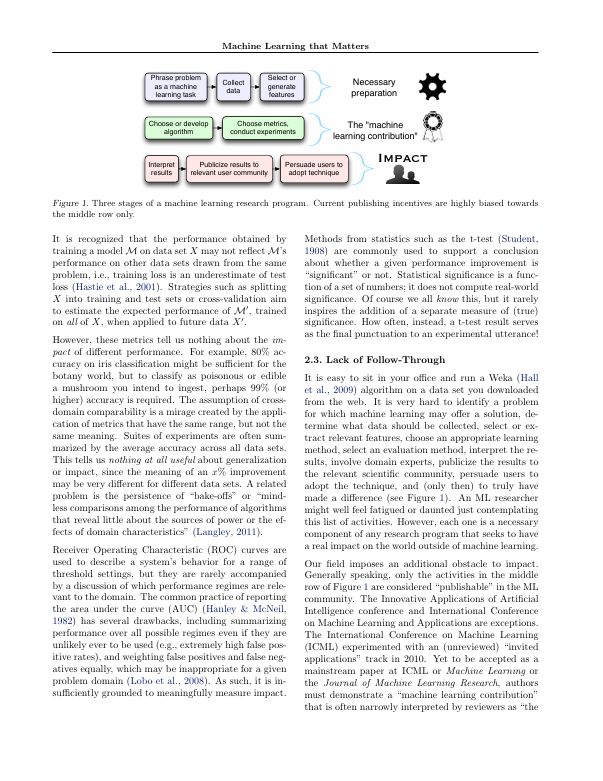

Machine Learning that matters by Kiri Wagstaff

In my first month at Critical...

a colleague pulled me aside and said

"what you do is not engineering"

My first reaction?

Offense

My second?

Denial

One year later...

I owe them an apology

They were right

This talk is my attempt

to set the record straight

Want to dive deeper?

Mind the gap

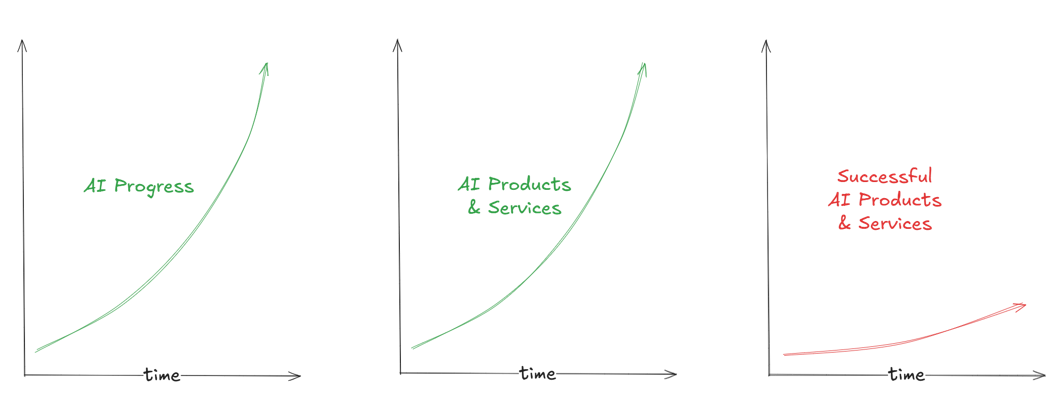

The AI revolution is accelerating...

Increased Spending

This year, global spending on AI

will reach $300B, growing 4.2x faster

than average IT spend.

Widespread Adoption

34% of enterprises have deployed

AI in production and 22% will

deploy in the next 12 months.

Generative AI Impact

Generative AI will increase

the impact of all AI by 15 to 40%

across all industries.

... but reality tells

a different story

No Roadmap, No Results

When it comes to AI adoption,

64% of companies lack a clear roadmap

with measurable goals.

Spending Big, Delivering Small

67% of organizations expect

to maintain or increase AI spending, yet

only 21% report any proven outcomes.

From Prototype To Nowhere

86% of all AI projects fail to deliver,

while 50% never make it to production.

The AI production gap is real...

... and we're still getting mixed signals...

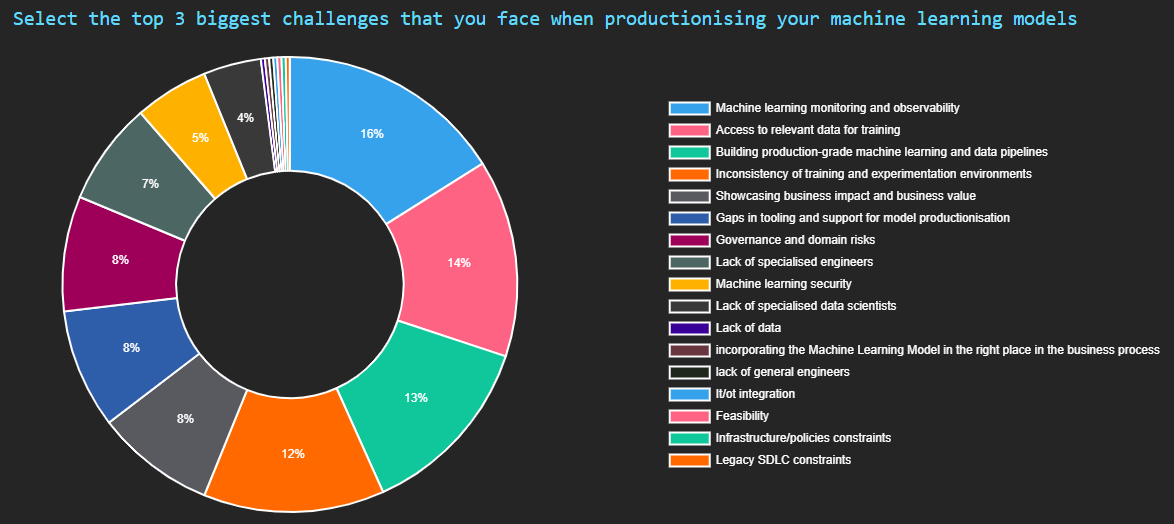

Why is it so hard

to productionize ML?

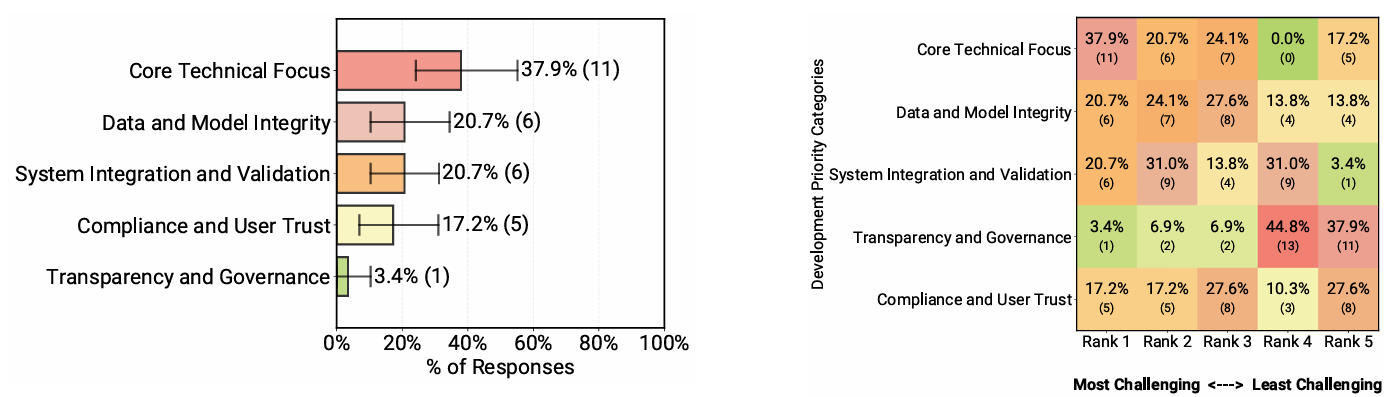

The State of Production ML in 2025

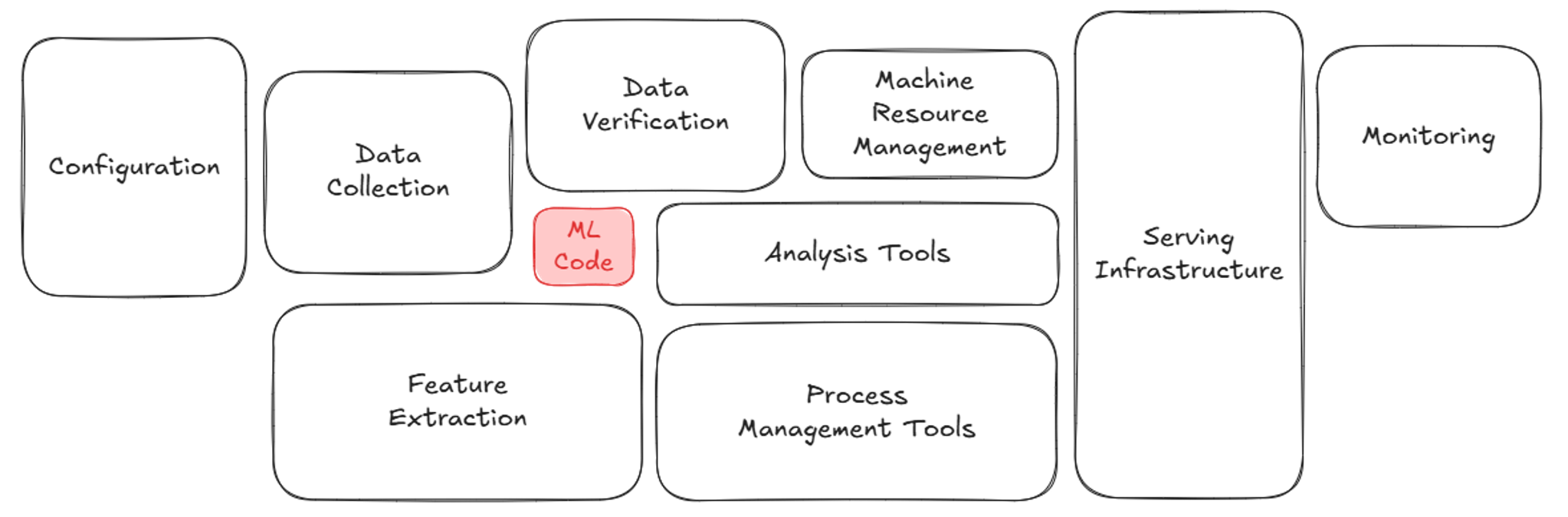

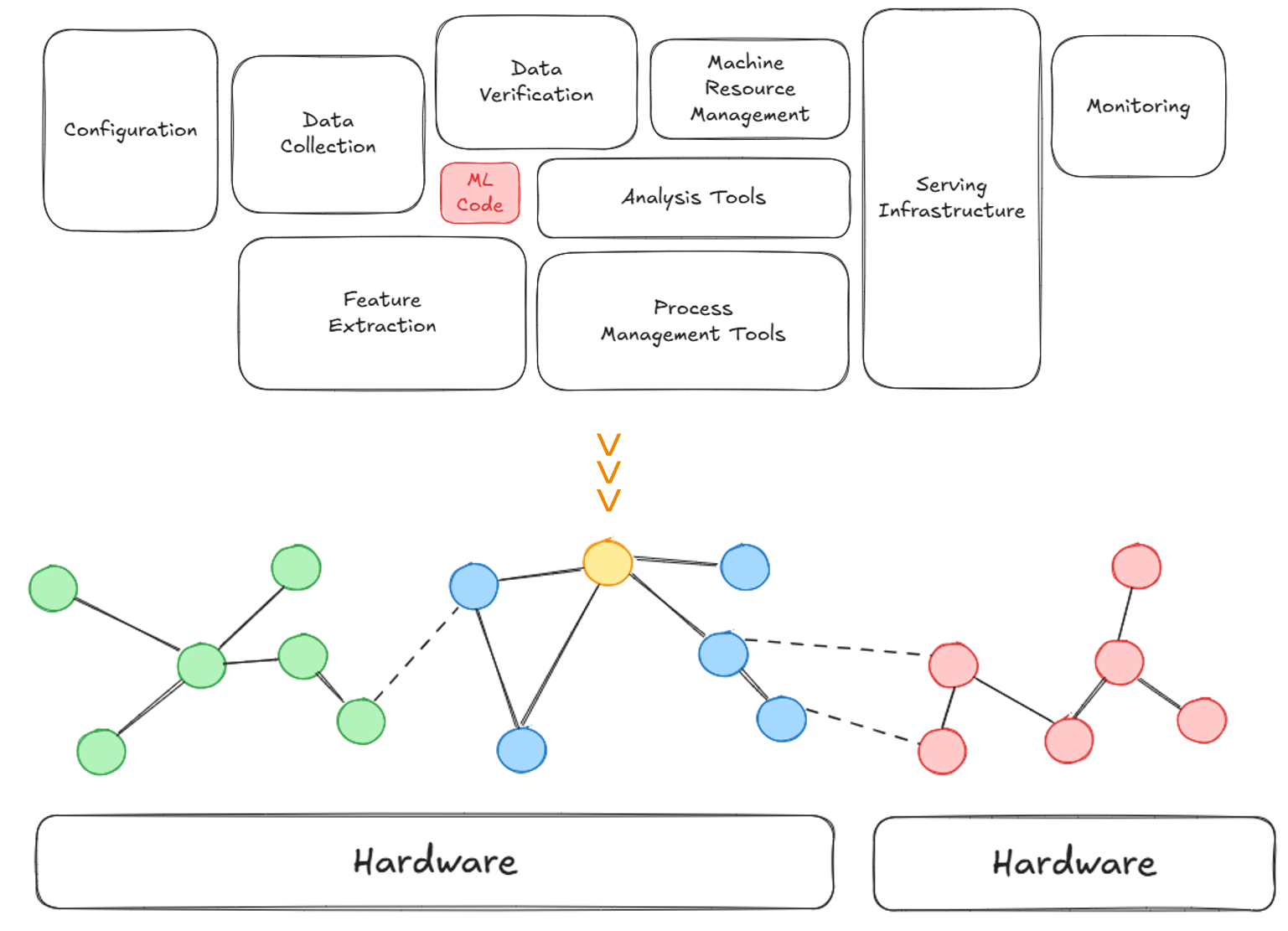

Technical debt is not so hidden anymore

Source: Adapted from Sculley et al. (2015)

In real applications, ML is just one among many components...

Why AI fails

Here's an uncomfortable truth...

At any AI conference, you'll hear about:

- better models

- bigger models

- more data

- higher scores

The Main Problem

Real-world impact isn't about intelligence.

It's about RELIABILITY.

NOT

Can we build AI?

BUT

Can we trust it when it matters?

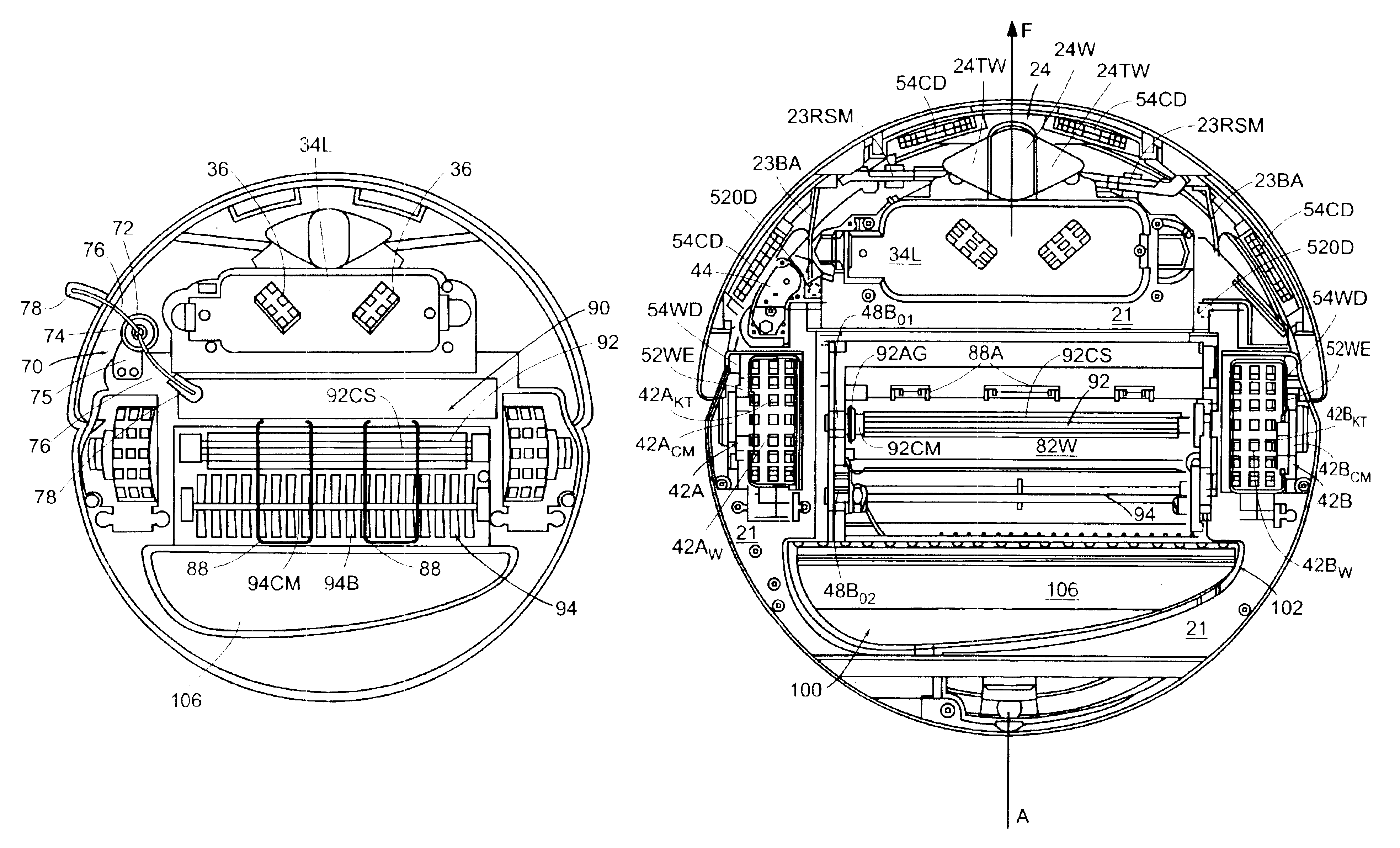

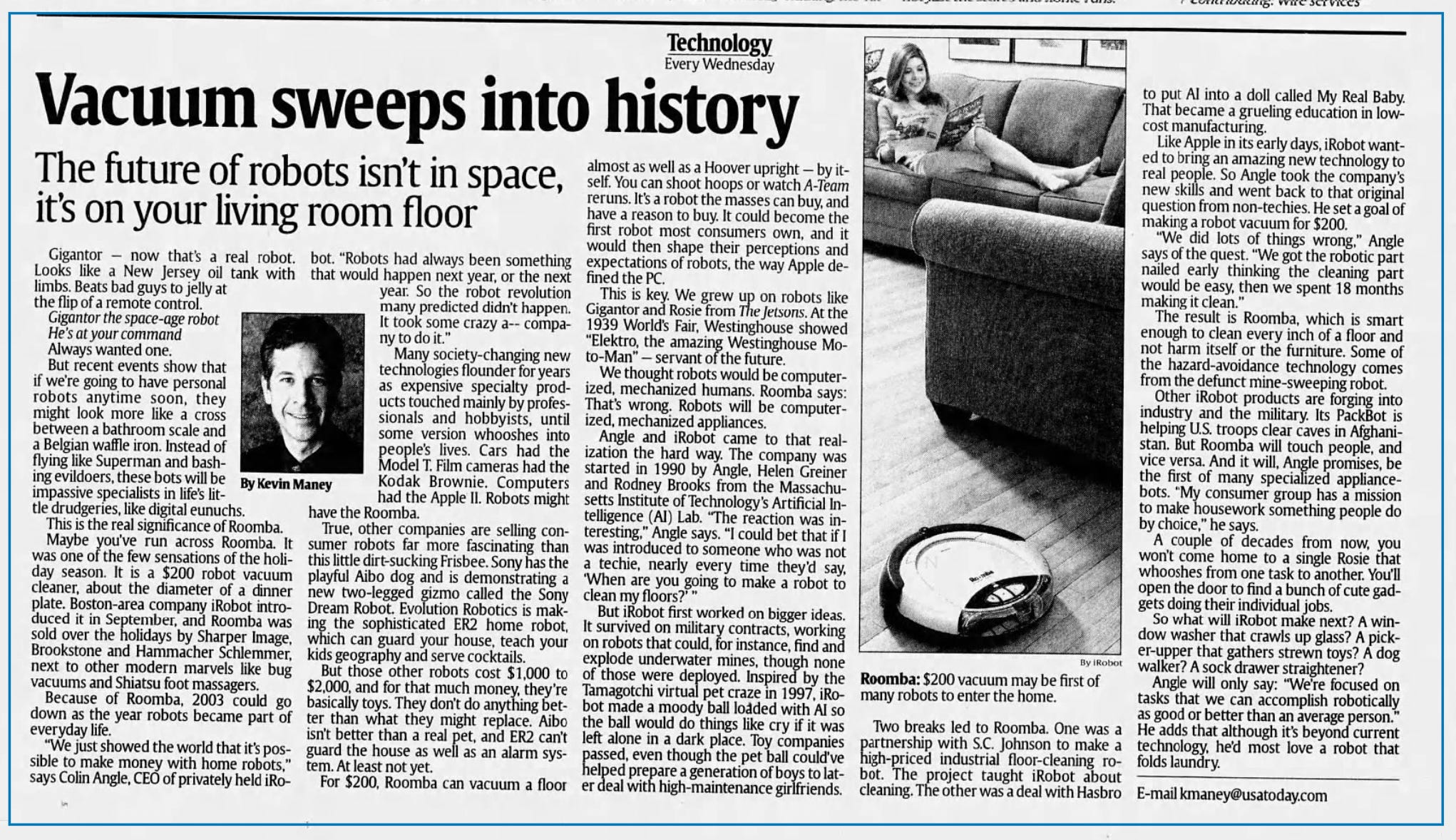

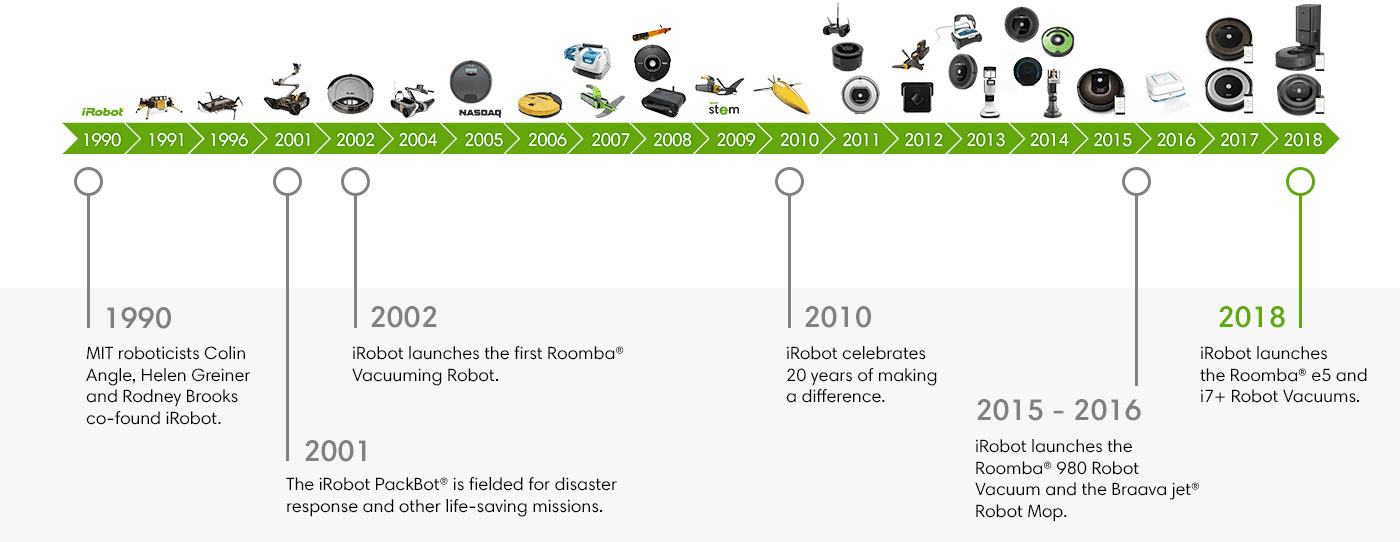

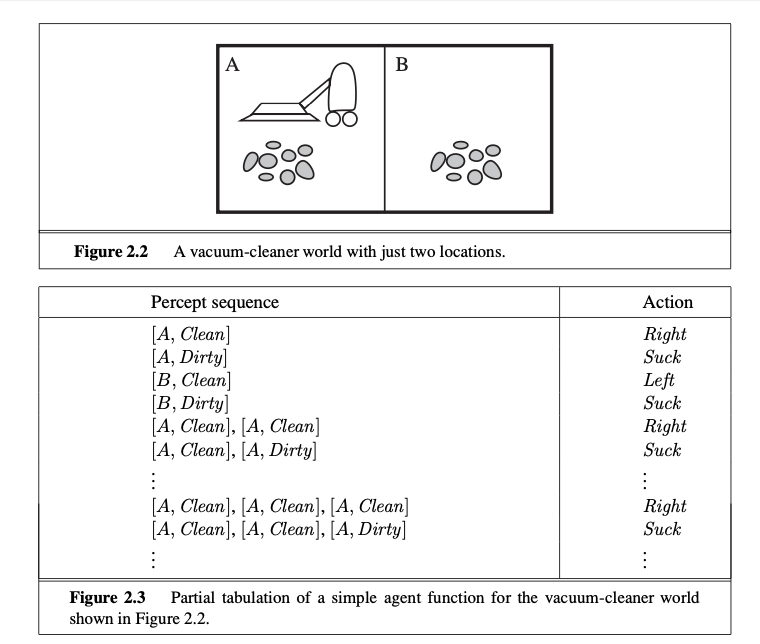

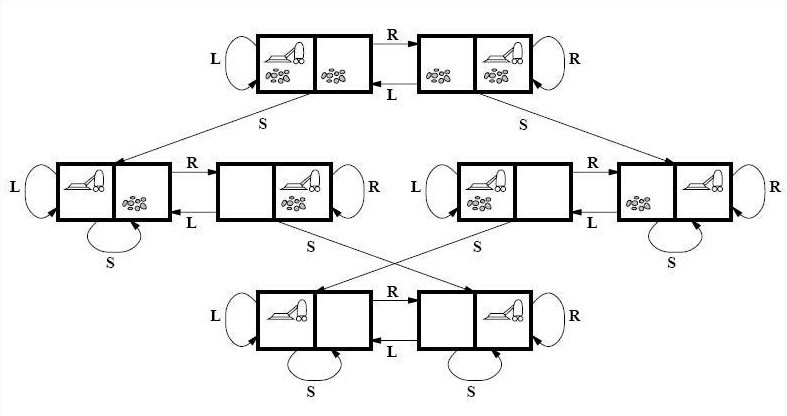

Meet US6883201B2 AKA Roomba

This little guy changed the world of robotics forever...

It has a long and storied history...

Vacuum cleaning is simple, right?

Let's play a game...

There are 3 main reasons why ML systems are removed from prod...

Who wants to take a guess?

Here are the winners...

🥉 Cost

🥈 Security

🥇 RELIABILITY

Measuring Agents in Production

Source: Pan et al. (2025)

And just when you thought it would work...

... you get this instead!

Why does this matter?

Because AI is already everywhere

that matters most

AI is saving lives in the ICU...

... making life-or-death decisions

AI is flying drones...

... and directing air traffic

AI is in space...

ESA's Φsat-2

Maritime Vessel Detection

Wildfire Detection

Autonomous in-space assembly

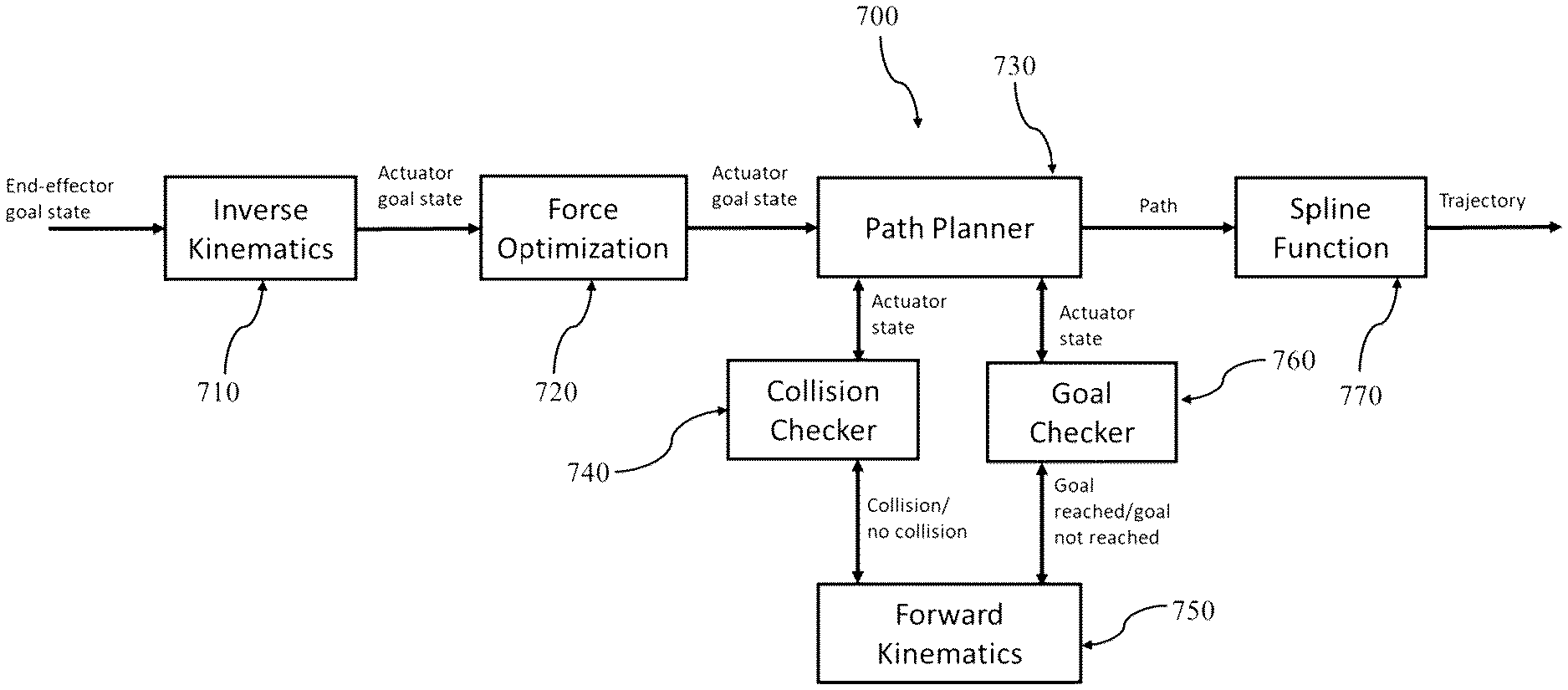

"(...) a convergence of modern control theory,

and machine learning" (Patent: US11989009B2)

Datacenters in space // Taranis

Why it's a terrible, horrible, no good idea

AI is inside nuclear reactors

The Atom and the Algorithm

Nuclear energy and AI are converging

to shape the future

AI is already improving nuclear

in many ways...

- Operations / predictive maintenance

- Design / reactor modelling

- Safety / accident simulation

- Safeguards / surveillance footage analysis

"Reassuringly, despite its brilliance, AI still needs a human to make sure it is right and impartial, and to understand the politics behind a safeguards footnote"

Nuclear at Argonne / PRO-AID

Vibe nuclear // Pivot-to-AI

What it is & why it's a bad idea

AI is in our critical services...

quietly running in the background

until something goes wrong

What is a critical system?

A system whose failure may cause

- injury or loss of life 😵

- infrastructure damage 💥

- environmental harm 🚱

- mission failure 🚀

- major financial loss 📉

When these systems fail...

real accidents happen!

Mars Climate Orbiter

Lost a spacecraft because one team

used metric and the other used imperial 📏

Patriot Missile Failure

Killed 28 soldiers due to a cumulative

rounding error in the system’s software 🎯
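To see how a "cumulative rounding error" can grow, here is a back-of-the-envelope sketch. It assumes the commonly cited figures for the incident (a clock counting tenths of a second, 0.1 chopped to 23 fractional bits, roughly 100 hours of uptime); the constant names are mine, not the system's.

```python
from fractions import Fraction

FRACTIONAL_BITS = 23      # assumption: bits available after the binary point
TICK = Fraction(1, 10)    # the clock counts tenths of a second
UPTIME_HOURS = 100        # assumption: commonly cited uptime before the failure

# Chop (not round) 0.1 to the available bits, as a fixed-point register would.
stored_tick = Fraction(int(TICK * 2**FRACTIONAL_BITS), 2**FRACTIONAL_BITS)
error_per_tick = TICK - stored_tick

# Every tick adds the same tiny error, so the drift grows linearly with uptime.
ticks = UPTIME_HOURS * 3600 * 10
clock_drift_s = float(error_per_tick * ticks)

print(f"error per tick: {float(error_per_tick):.3e} s")
print(f"drift after {UPTIME_HOURS} h: {clock_drift_s:.3f} s")
```

Under these assumptions the clock drifts by about a third of a second, which is a long time when you are tracking a fast-moving target.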

Knight Capital Trading Glitch

Lost $440M in 45 minutes

after deploying buggy code 💸

Toyota Unintended Acceleration

Spaghetti code broke the brakes 🚗

Good enough is not good enough

At least, not in critical systems

“Do you code with your

loved ones in mind?”

― Emily Durie-Johnson, Strategies for Developing Safety-Critical Software in C++

If the stakes are this high...

Is it really a good idea to bring AI to critical systems?

Let's take a step back...

Traditional software

It does exactly what you tell it to do...

- Same input, same output... always

- Rules are explicit and readable

- Bugs have clear causes and fixes

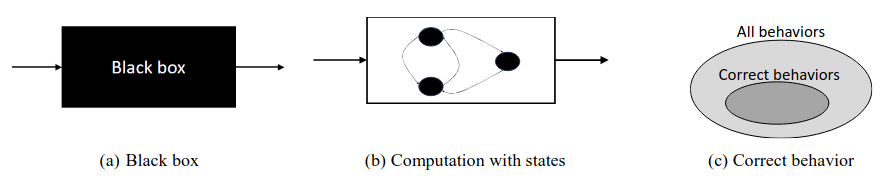

What is determinism?

Source: Andersson et al. (2024)

You write the rules

You know what it will do

You know why it broke

You are in control

Defeating Nondeterminism in LLM Inference

Source: He et al. (2025)
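One commonly cited ingredient of nondeterministic inference is that floating-point addition is not associative, so the order in which partial results are reduced can change the answer. A minimal sketch in plain Python (no GPU or LLM involved; the variable names are illustrative):

```python
# Same three numbers, two groupings, two different results.
a, b, c = 0.1, 0.2, 0.3

left_to_right = (a + b) + c
right_to_left = a + (b + c)

print(left_to_right == right_to_left)
print(repr(left_to_right), repr(right_to_left))
```

The difference is tiny, but once reduction order depends on batching or scheduling, "same input, same output" is no longer guaranteed by default.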

ML Systems

You shift the agency to data:

- The data wrote the rules

- Change the data, change the behavior

- Garbage in, garbage out

You didn't write the rules

You don't always know what it will do

You don't always know why it broke

You are not in control

AI amplifies complexity...

and complexity breaks things.

S*** happens!

Models will make mistakes

Just stick something to it...

or when is a stop sign not like a stop sign?

Nissan's Emergency Braking

False positives posed traffic risks to drivers

Waymo School Bus Problem

Polite software that 'moved out of the way'

by illegally passing the bus. 🚌

Even great models eventually fail...

often in strange and unpredictable ways

What should we do about it?

Let's turn to the ECSS ML handbook...

Golden Rule #1

Do NOT build AI

just because you have data.

Golden Rule #2

Do NOT use AI

just because you can.

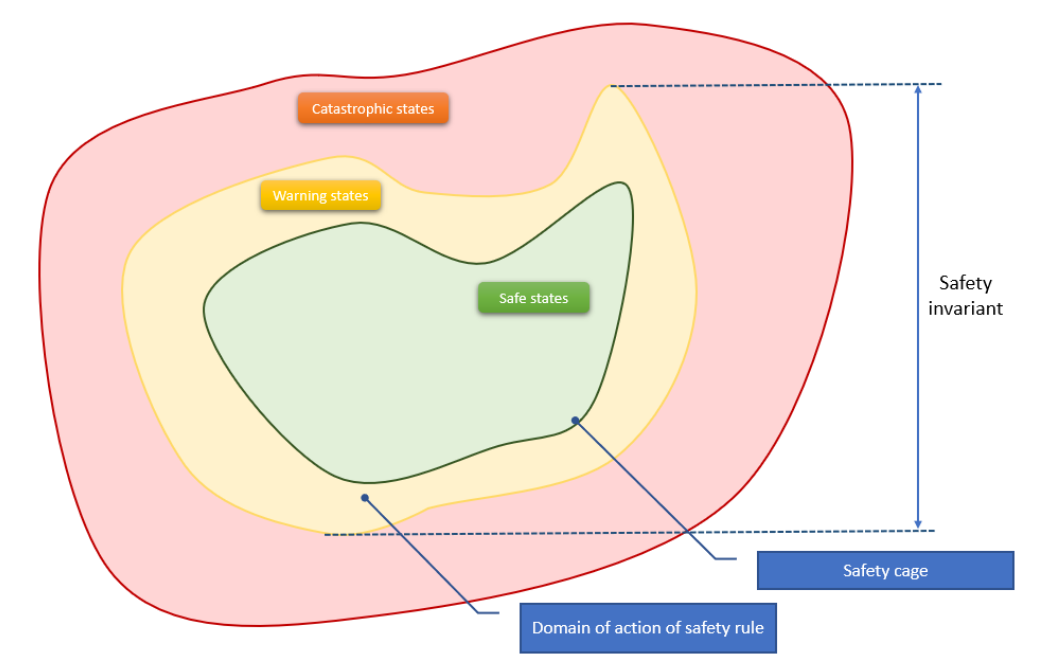

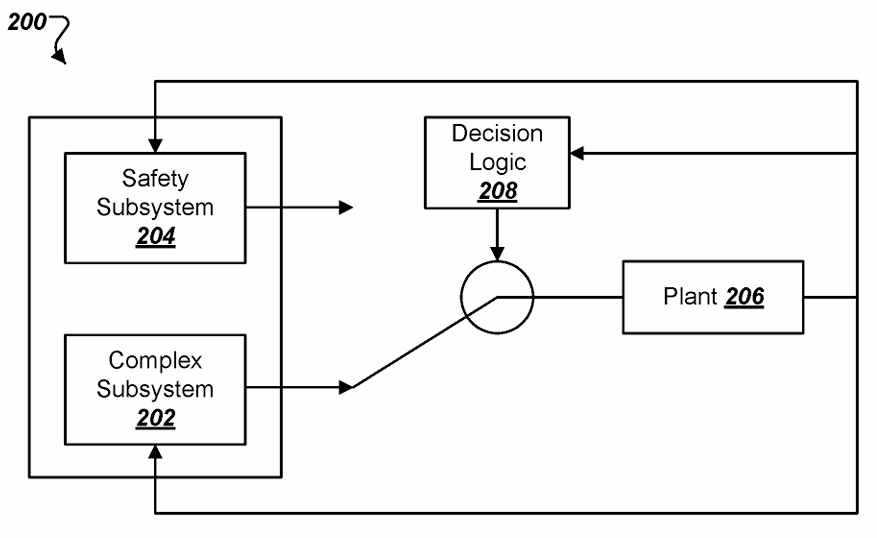

Safety Cage Architecture

Don't try to prove that ML is safe.

Instead, constrain it so it can't be unsafe.

Source: Delseny et al. (2021) / DEEL
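A minimal sketch of the idea, assuming a scalar actuator command: whatever the ML component proposes, a small deterministic wrapper rate-limits and clamps it to a pre-approved envelope. `SafetyCage`, `untrusted_model` and the numeric limits are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyCage:
    lower: float      # lowest command considered safe
    upper: float      # highest command considered safe
    max_step: float   # largest allowed change between two consecutive commands

    def constrain(self, proposed: float, previous: float) -> float:
        # Rate-limit first, then clamp to the safe envelope.
        step = max(-self.max_step, min(self.max_step, proposed - previous))
        return max(self.lower, min(self.upper, previous + step))


def untrusted_model(x: float) -> float:
    """Hypothetical stand-in for an ML component with no guarantees."""
    return 10.0 * x


cage = SafetyCage(lower=-1.0, upper=1.0, max_step=0.25)
command = cage.constrain(untrusted_model(0.5), previous=0.0)
print(command)
```

The cage is simple enough to review and test exhaustively, which is the point: the safety argument rests on the wrapper, not on the model.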

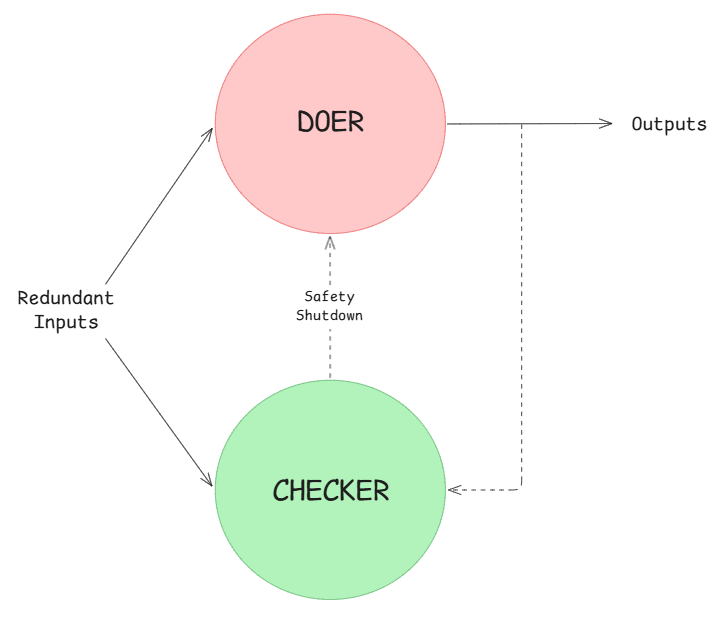

Doer / Checker Architecture

The doer optimizes for performance.

The checker handles safety.
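A toy sketch of the split, assuming a driving-style scenario: the doer proposes an action, a small rule-based checker accepts or rejects it, and a rejection triggers a predefined safe action. All names, fields and thresholds below are hypothetical.

```python
MIN_HEADWAY_S = 2.0      # assumption: required time gap to the vehicle ahead
SAFE_ACTION = "brake"    # assumption: predefined fail-safe action


def doer(obs: dict) -> str:
    """Performance-oriented stand-in: only cares about reaching target speed."""
    return "accelerate" if obs["speed_mps"] < obs["target_speed_mps"] else "hold"


def checker(obs: dict, action: str) -> bool:
    """Simple, auditable rule: never accelerate without enough headway."""
    if action == "accelerate":
        return obs["gap_m"] >= MIN_HEADWAY_S * obs["speed_mps"]
    return True


def act(obs: dict) -> str:
    proposed = doer(obs)
    return proposed if checker(obs, proposed) else SAFE_ACTION


print(act({"speed_mps": 20.0, "target_speed_mps": 30.0, "gap_m": 100.0}))
print(act({"speed_mps": 20.0, "target_speed_mps": 30.0, "gap_m": 10.0}))
```

The doer can be as clever as you like; the checker stays small so it can be held to a much higher assurance standard.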

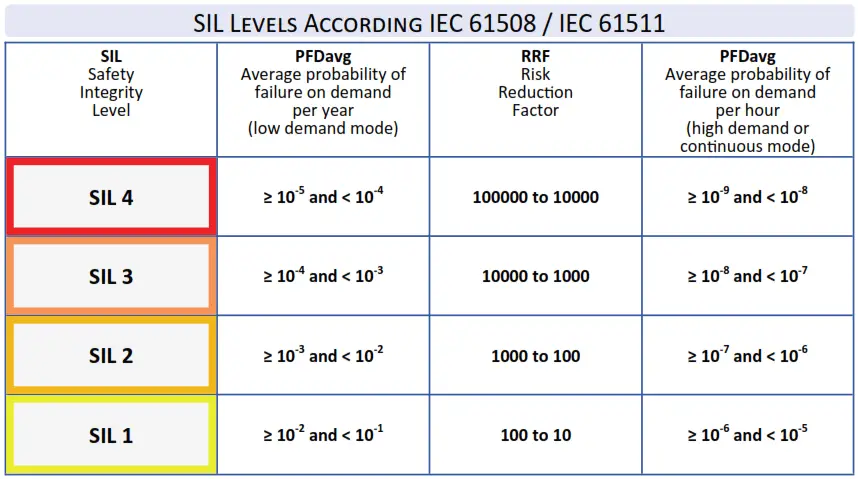

Doer/Checker > Automotive

The doer can be low ASIL ⬇️

The checker must be high ASIL 🚨

Automotive > ISO 26262

Automotive Safety Integrity Levels (ASIL)

Aerospace > DO-178C

Development Assurance Levels (DAL)

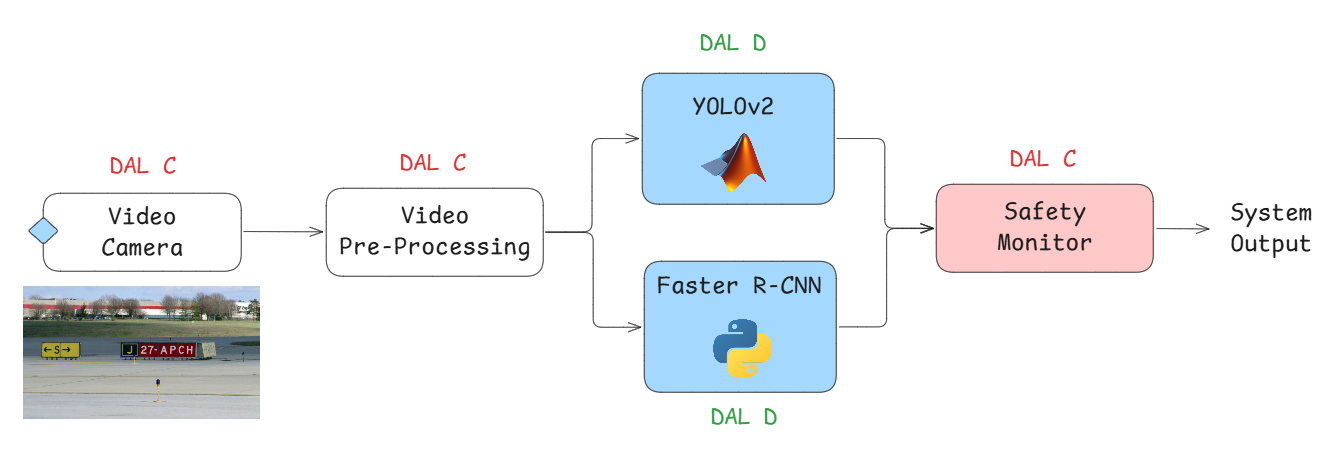

NASA on using LLMs for Assurance

Runway Sign Classifier

Is this application DAL-C or DAL-D certifiable?

Source: Adapted from Dmitriev et al. (2023)

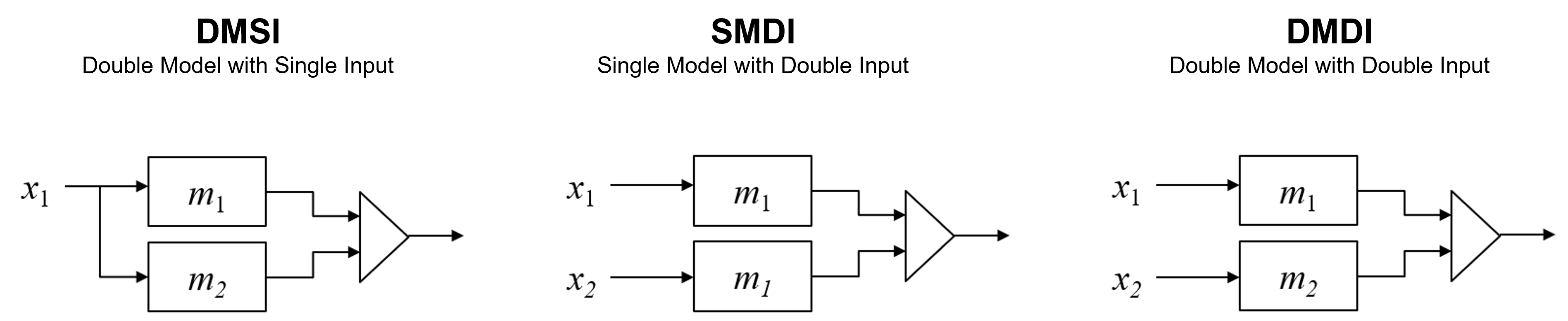

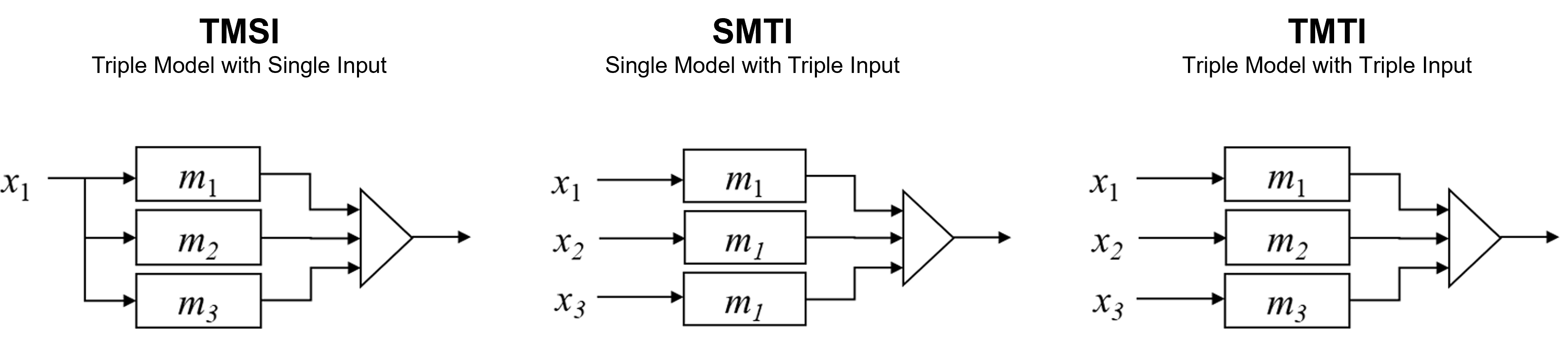

N-Version Architecture

Different versions of ML models and/or their inputs are used in a system to improve the output reliability.
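One simple way to combine the versions is a strict-majority voter, sketched below. This is an illustration only, not the specific scheme from the cited sources; `majority_vote` is a hypothetical helper.

```python
from collections import Counter
from typing import Hashable, Optional, Sequence


def majority_vote(predictions: Sequence[Hashable]) -> Optional[Hashable]:
    """Return the label backed by a strict majority, or None if there is none."""
    if not predictions:
        return None
    label, count = Counter(predictions).most_common(1)[0]
    return label if count > len(predictions) / 2 else None


# Three versions agree two-to-one: the majority wins.
print(majority_vote(["stop", "stop", "yield"]))

# Two versions disagree: no majority, so the system should fall back.
print(majority_vote(["stop", "yield"]))
```

Returning `None` instead of guessing makes disagreement an explicit signal that the rest of the system has to handle.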

2-Version Variants

Source: Adapted from Machida (2019)

3-Version Variants

Source: Adapted from Machida (2019)

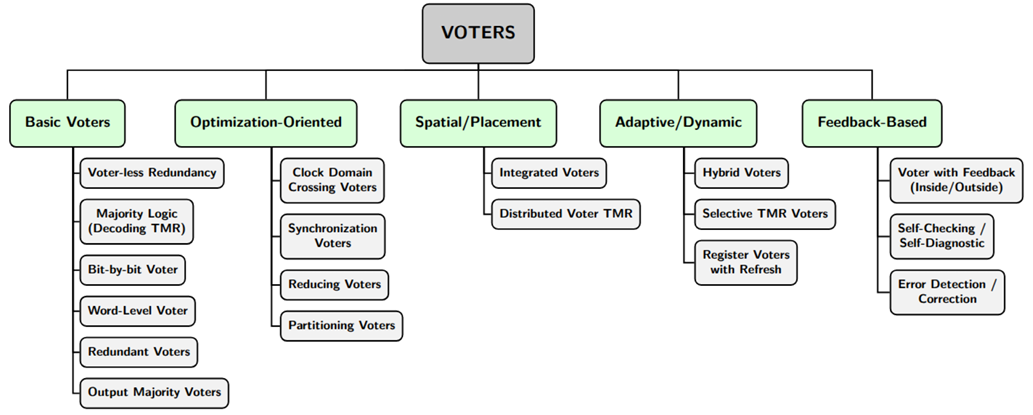

Voter Architectures

Source: Flad (2026)

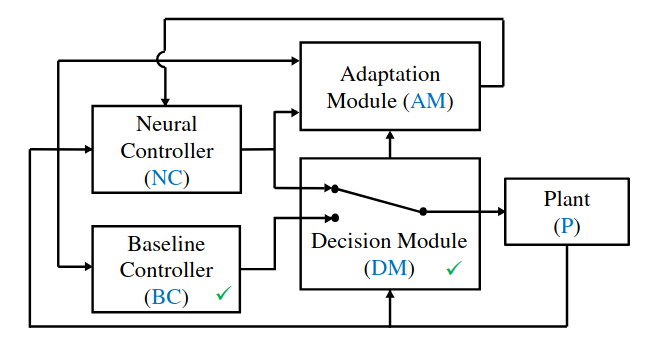

(Neural) Simplex Architecture

Source: Phan et al. (2019)
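A toy sketch of the basic Simplex switching logic, assuming a one-dimensional position that must stay within a limit: a decision module lets the advanced controller act only while the next state stays inside a region the baseline controller can recover from. The controllers, `LIMIT` and `MARGIN` are hypothetical, and the retraining and switch-back refinements of the neural variant are left out.

```python
LIMIT = 5.0    # safe region: |position| <= LIMIT
MARGIN = 1.0   # assumption: baseline can always recover inside LIMIT - MARGIN


def advanced_controller(position: float) -> float:
    """Stand-in for a high-performance (e.g. neural) controller: always pushes."""
    return 1.5


def baseline_controller(position: float) -> float:
    """Simple, verifiable controller: nudges the system back towards the centre."""
    return -0.5 if position > 0 else 0.5


def decision_module(position: float) -> tuple:
    proposed = advanced_controller(position)
    if abs(position + proposed) <= LIMIT - MARGIN:
        return proposed, "advanced"
    return baseline_controller(position), "baseline"


def simulate(steps: int) -> list:
    position, trace = 0.0, []
    for _ in range(steps):
        command, source = decision_module(position)
        position += command
        trace.append((position, source))
    return trace


trace = simulate(50)
print(max(abs(p) for p, _ in trace), {source for _, source in trace})
```

The advanced controller gets to run most of the time, but the safety claim only depends on the decision module and the baseline.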

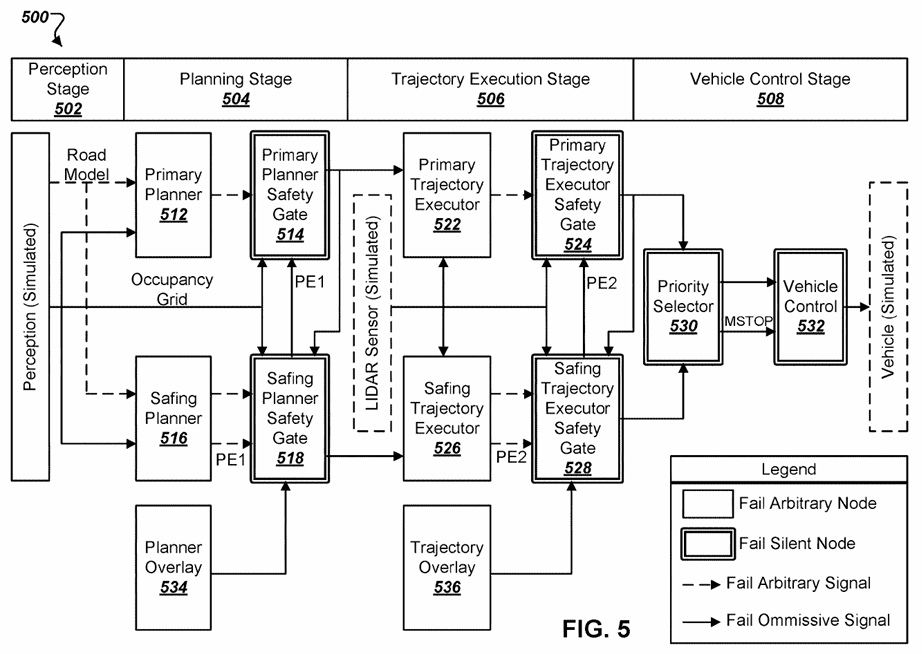

Simplex Architecture > Automotive

Patent: US10962972B2

Safety Architecture for Autonomous Vehicles

Saab / Helsing Collaboration

"While all of Helsing’s work primarily focused on software model training, integration with Gripen E APIs and testing, Saab actually set the groundwork for operating a software-defined aircraft several years ago with an overhaul to the Gripen’s avionics."

Saab's Split Avionics

Tactical vs Flight Critical

"Gripen’s avionics system separates 10% of the aircraft's flight critical management codebase from 90% of its tactical management code, resulting in avionics that are 'hardware agnostic'."

Software-Defined Assurance / Helsing

"Many of the well-known approaches used to ensure the reliability of software are difficult or impossible to apply to AI-based software, where models are created from data rather than hand-coded by software developers. This creates friction in the commissioning and development of AI-based software, because it is unclear what criteria will be used to assure it. The potential worst case is that assurance of systems involving AI are subject to a matrix of both poorly-fitting existing requirements and new but underspecified AI-related requirements."

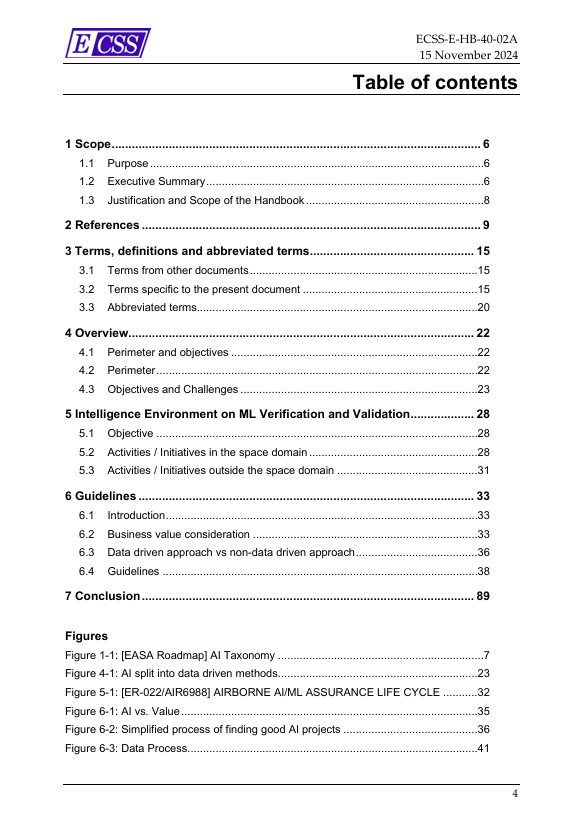

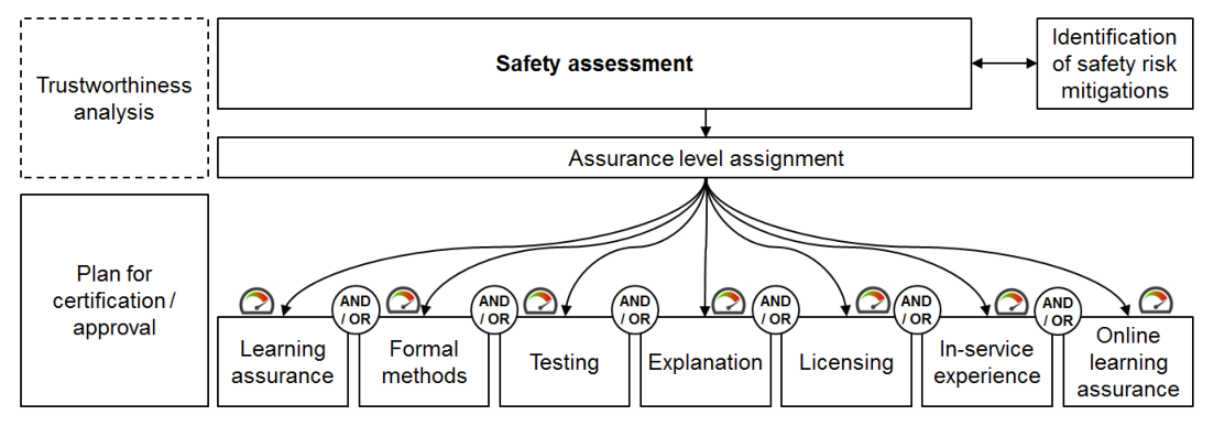

Airborne AI/ML Assurance Lifecycle

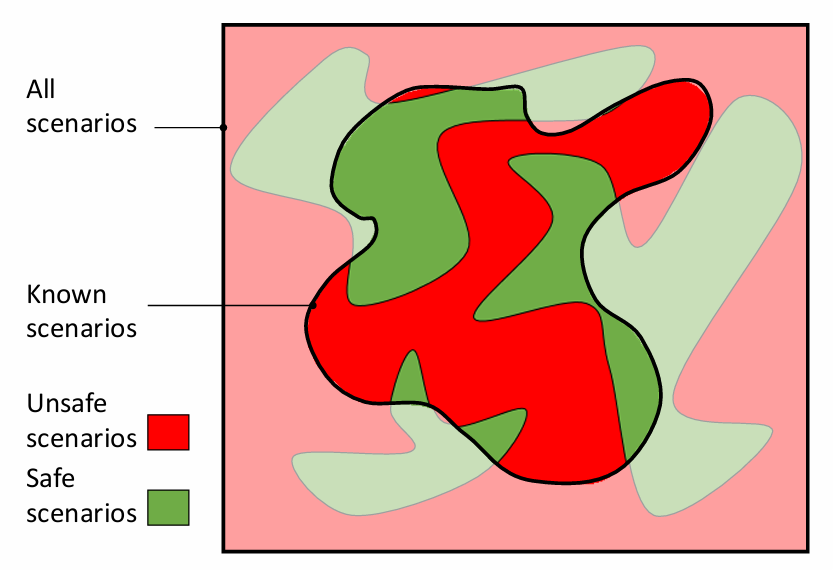

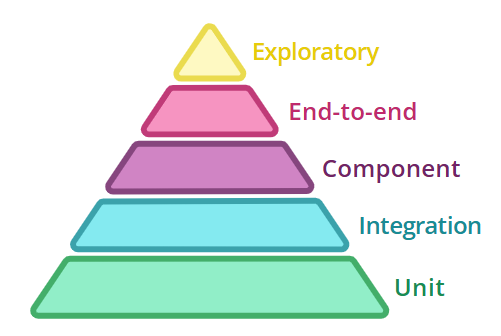

Testing

AI is part of the system.

So test it like it is.

The ECSS ML handbook suggests checking:

- Known cases (the expected)

- Coverage (the internals)

- Edge cases (the unknown)

- Adversarial cases (the hostile)
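The four checks above can be sketched against a toy component. Everything here is an assumption made for illustration: `score` and `predict` stand in for a real model, "coverage" is reduced to exercising every output branch, and the adversarial check is a simple perturbation test rather than a real attack.

```python
import math
import random

THRESHOLD = 0.5


def score(x: float) -> float:
    """Hypothetical model: a numerically stable logistic score."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-4.0 * x))
    z = math.exp(4.0 * x)
    return z / (1.0 + z)


def predict(x: float) -> str:
    if not math.isfinite(x):
        return "reject"  # refuse inputs the model was never meant to see
    return "positive" if score(x) >= THRESHOLD else "negative"


def check_known_cases() -> None:
    assert predict(2.0) == "positive"
    assert predict(-2.0) == "negative"


def check_coverage() -> None:
    # Every output branch must be reachable from the test inputs.
    seen = {predict(x) for x in (2.0, -2.0, float("nan"))}
    assert seen == {"positive", "negative", "reject"}


def check_edge_cases() -> None:
    assert predict(0.0) == "positive"        # exactly on the decision boundary
    assert predict(1e6) == "positive"        # huge magnitudes must not overflow
    assert predict(-1e6) == "negative"
    assert predict(float("inf")) == "reject"


def check_adversarial_cases(trials: int = 1000) -> None:
    # Small perturbations away from the boundary must not flip the label.
    rng = random.Random(0)
    for _ in range(trials):
        x = rng.choice([-1, 1]) * rng.uniform(0.1, 5.0)
        noise = rng.uniform(-0.05, 0.05)
        assert predict(x + noise) == predict(x)


for check in (check_known_cases, check_coverage, check_edge_cases, check_adversarial_cases):
    check()
print("all checks passed")
```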

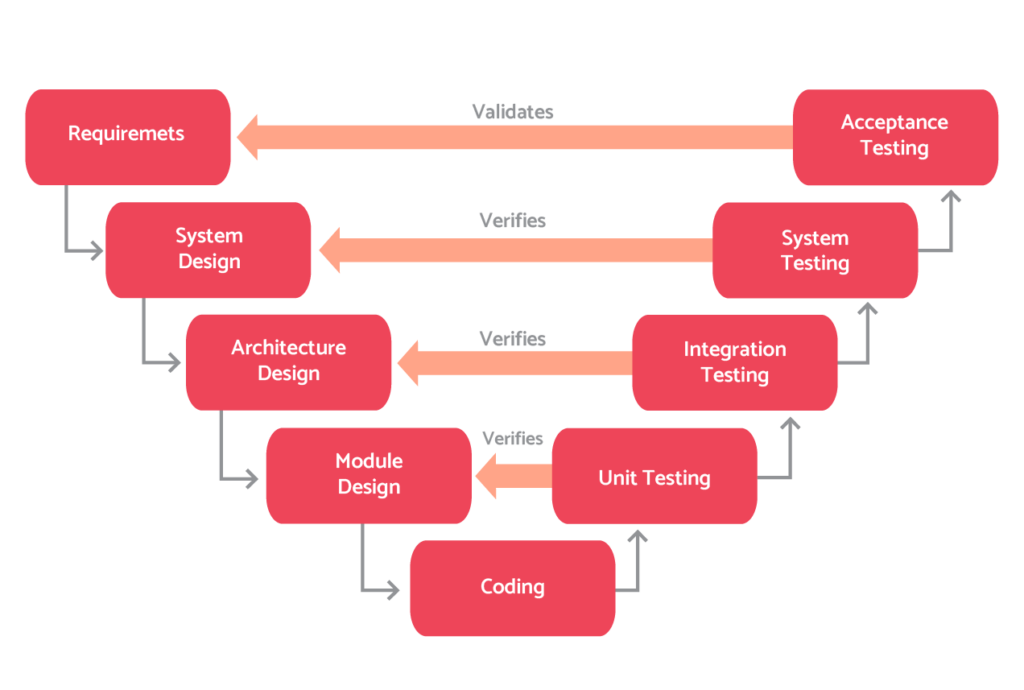

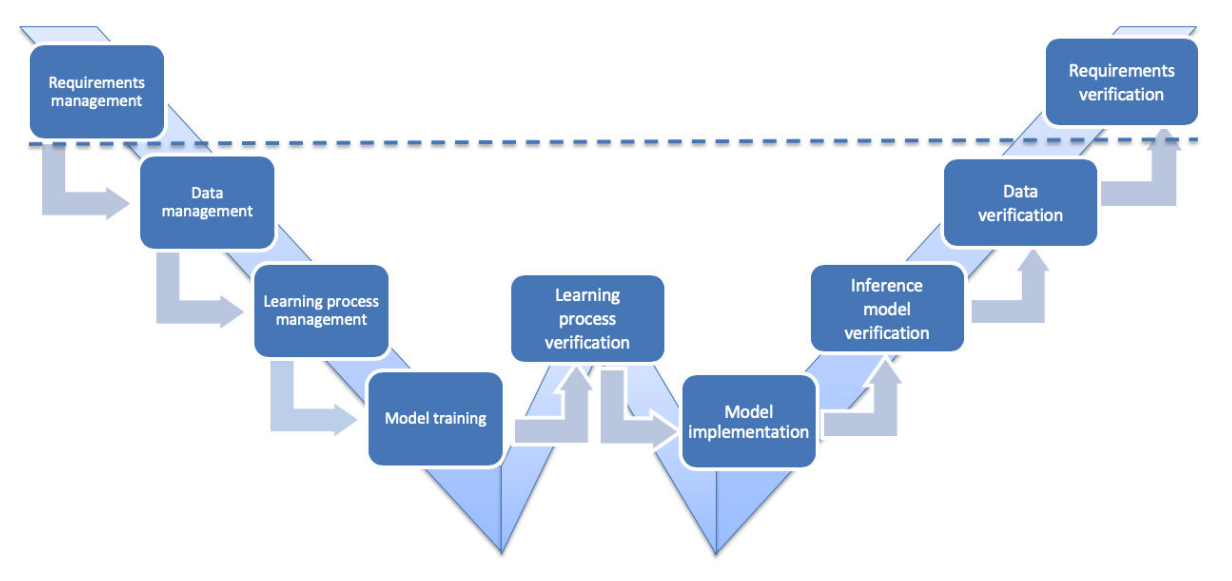

You may know about the V-Cycle...

... but what about the W-Cycle?

Source: EASA / Daedalean (2024)

Formal Verification

Mathematically prove that

certain behaviors cannot happen.

Here's a short crash course on formal methods for software verification...

* Oldie, but goodie!

Reactive System

Systems that maintain an ongoing interaction

with the environment, as opposed to computing

some final value on termination.

Concurrent programs

Embedded and process control programs

Perpetually ongoing processes

Operating systems

These systems are not

defined by what they do

but when they do it.

Time is the most important thing in engineering...

There's a saying at Google...

"Software engineering is programming integrated over time."

Winters, Manshreck & Wright (2020)

If you take this literally...

$$\texttt{SWE} = \int \texttt{Programming} ~dt$$

Then engineering is just...

$$f \mapsto \texttt{E}[f] = \int^{\min[\text{EOL}, ~+\infty]}_{\max[-\infty, ~\text{idea}]} f ~dt$$

Our Mission

Ensure that certain properties hold at all times.

Safety property

bad thing never happens

$$\square ~\neg \texttt{bad}$$

Liveness property

good thing eventually happens

$$\lozenge ~\texttt{good}$$
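Both properties can be evaluated on a recorded trace, sketched below with hypothetical state fields. This is runtime checking on a finite trace, not a proof: a finite trace can refute a safety property, but it can only confirm a liveness property for the run that was observed.

```python
from typing import Callable, Iterable

State = dict


def always(predicate: Callable[[State], bool], trace: Iterable[State]) -> bool:
    """Finite-trace reading of 'always': the predicate holds in every state."""
    return all(predicate(state) for state in trace)


def eventually(predicate: Callable[[State], bool], trace: Iterable[State]) -> bool:
    """Finite-trace reading of 'eventually': the predicate holds in some state."""
    return any(predicate(state) for state in trace)


def bad(state: State) -> bool:
    return state["door_open"] and state["moving"]


def good(state: State) -> bool:
    return state["arrived"]


trace = [
    {"door_open": False, "moving": False, "arrived": False},
    {"door_open": False, "moving": True, "arrived": False},
    {"door_open": False, "moving": True, "arrived": False},
    {"door_open": True, "moving": False, "arrived": True},
]

safety_holds = always(lambda s: not bad(s), trace)
liveness_holds = eventually(good, trace)
print(safety_holds, liveness_holds)
```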

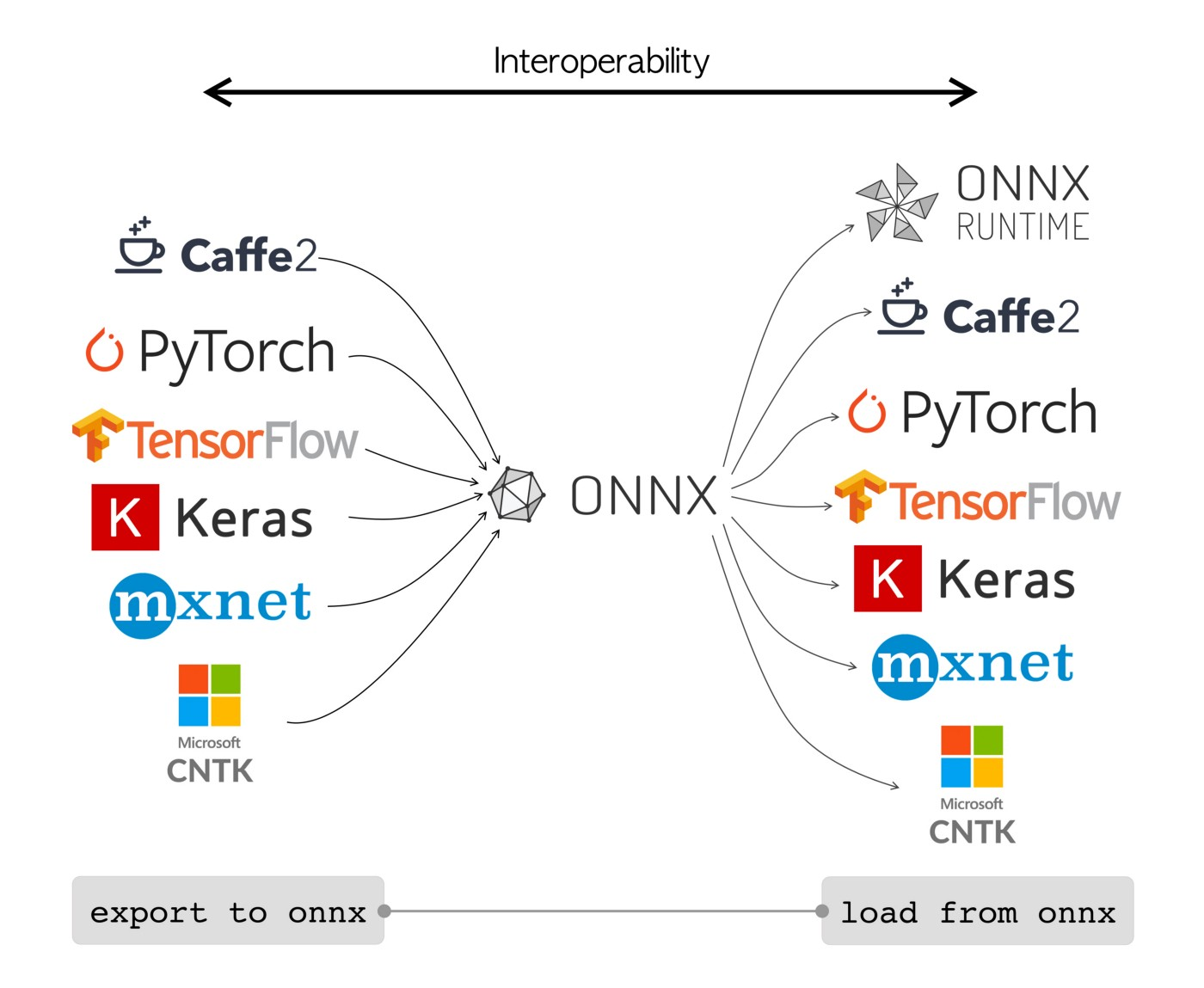

Formal Methods $\rightarrow$ AI

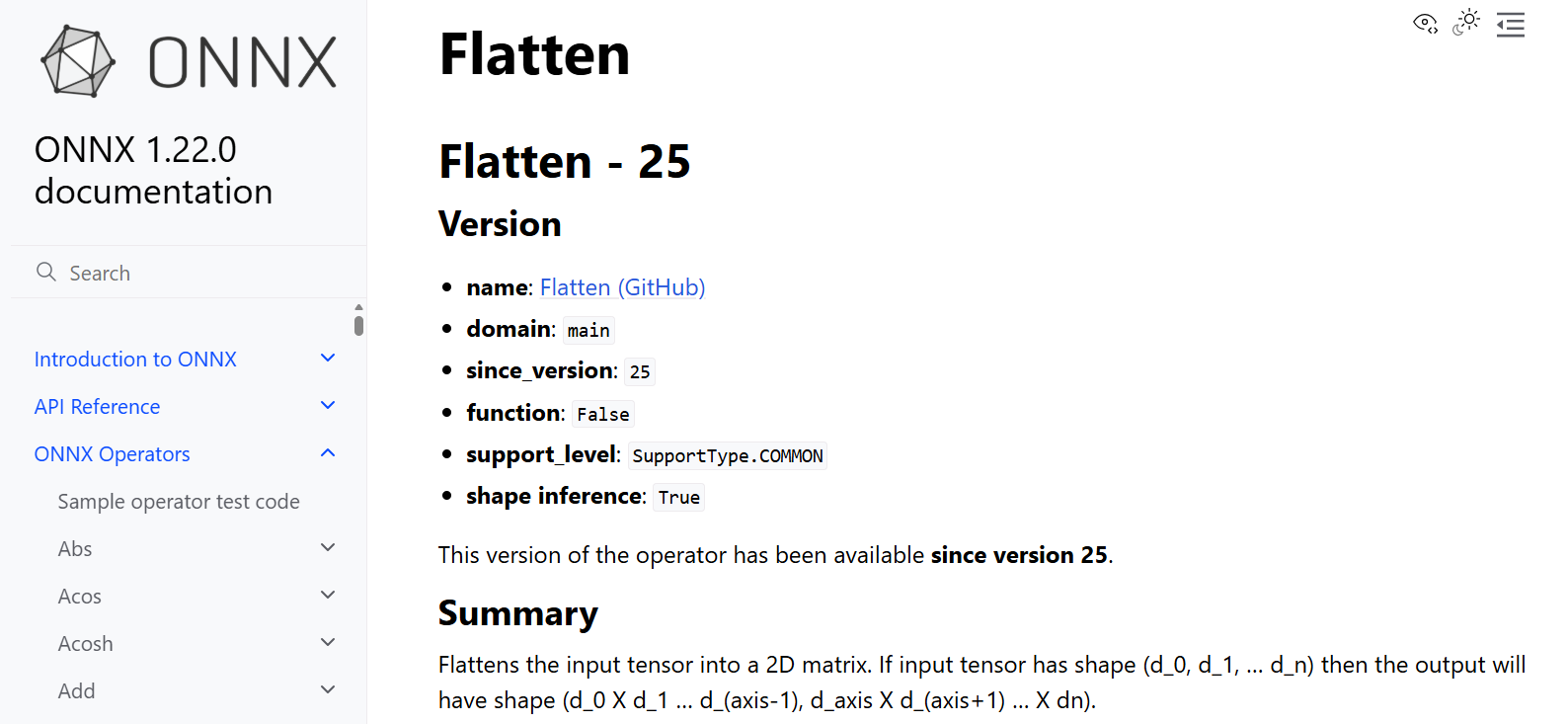

ONNX $\rightarrow$ Safe ONNX

Let's make ONNX deterministic and fully verifiable...

Docs $\rightarrow \cdots \rightarrow$ Why3

    module COPFlatten
      use OPFlatten
      use tensor.Tensor
      use list.List
      use list.Length
      use int.Int
      use libtensor.CTensor
      use libvector.CIndex
      use std.Clib
      use mach.int.Int32
      use std.Cfloat

      let cflatten (x r : ctensor) (axis: int32)
        requires { valid_tensor x }
        requires { valid_tensor r }
        requires { r.t_rank = 2 }
        requires { let axis_normalized = normalize_axis (to_int axis) (length (tensor x).dims) in
                   (ivector r.t_dims r.t_rank) = flat_dims (tensor x) axis_normalized }
        requires { vdim x.t_dims x.t_rank = vdim r.t_dims r.t_rank }
        requires { -length (tensor x).dims <= (to_int axis) <= length (tensor x).dims }
        ensures { tensor r = flatten (tensor x) (to_int axis) }
      =
        let m = cdim_size r.t_dims r.t_rank in
        for i = 0 to m - 1 do
          invariant { forall k. 0 <= k < i -> value_at r.t_data k = value_at x.t_data k }
          r.t_data[i] <- x.t_data[i]
        done;
        assert { tensor r == flatten (tensor x) (to_int axis) }
    end
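For intuition, here is a plain Python reference of what the specification above says, assuming the standard ONNX Flatten semantics: the data is copied unchanged and only the shape becomes two-dimensional. The names `normalize_axis` and `flat_dims` mirror the Why3 ones, but these bodies are my own sketch, not the verified definitions.

```python
from math import prod
from typing import Sequence, Tuple


def normalize_axis(axis: int, rank: int) -> int:
    """Map an axis in [-rank, rank] to the equivalent non-negative axis."""
    if not -rank <= axis <= rank:
        raise ValueError(f"axis {axis} out of range for rank {rank}")
    return axis + rank if axis < 0 else axis


def flat_dims(dims: Sequence[int], axis: int) -> Tuple[int, int]:
    """Collapse dims before `axis` into rows and the rest into columns."""
    return prod(dims[:axis]), prod(dims[axis:])


def flatten(data: Sequence[float], dims: Sequence[int], axis: int):
    """Return (data, new_dims): elements keep their order, only the shape changes."""
    k = normalize_axis(axis, len(dims))
    return list(data), flat_dims(dims, k)


data, dims = flatten(range(24), (2, 3, 4), axis=1)
print(dims)
```

This is why the loop body in the Why3 program is a straight element-by-element copy: all the interesting content lives in the shape preconditions.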

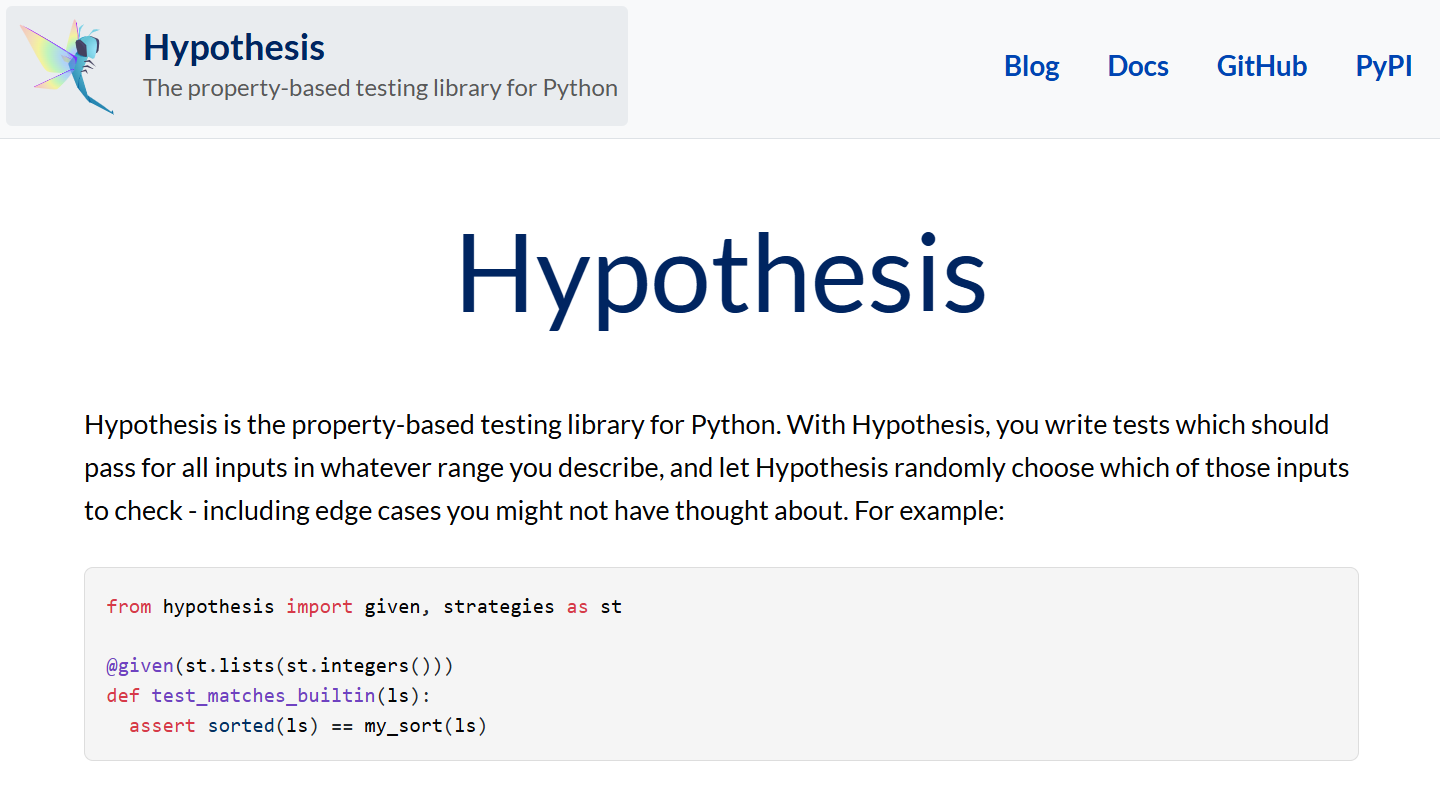

Property-Based Testing / Hypothesis

Why3 $\rightarrow \cdots \rightarrow$ C

    void cflatten(struct ctensor x, struct ctensor r, int32_t axis) {
      int32_t m, i, o;
      m = cdim_size(r.t_dims, r.t_rank);
      o = m - 1;
      if (0 <= o) {
        for (i = 0; ; ++i) {
          r.t_data[i] = x.t_data[i];
          if (i == o) {
            break;
          }
        }
      }
    }

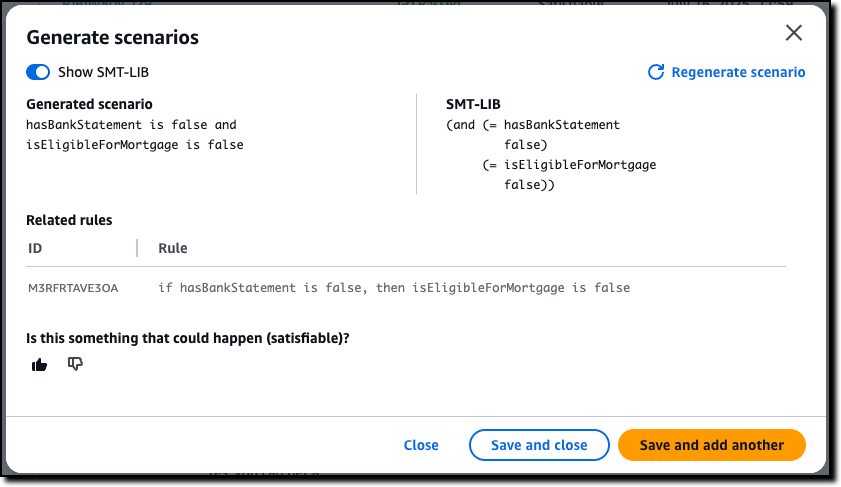

AI $\rightarrow$ Formal Methods

Natural Language

$\downarrow$

Temporal Logic Formulas

Minimize Hallucinations

with Automated Reasoning

When AI writes most software...

who verifies it?

Most people think of verification as a cost, a tax on development, justified only for safety-critical systems. That framing is outdated. When AI can generate verified software as easily as unverified software, verification is no longer a cost. It is a catalyst.

― Leonardo de Moura, creator of Lean

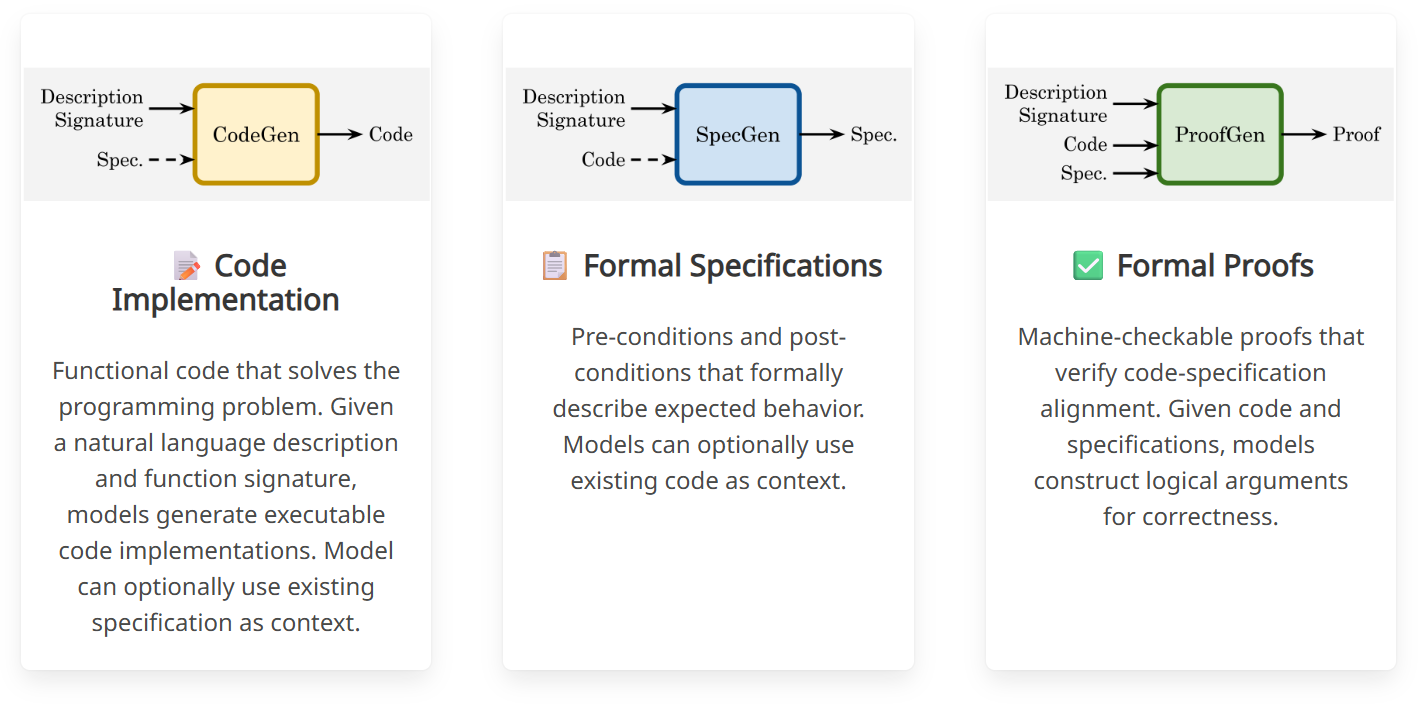

Verina: Benchmarking Verifiable Code Generation

Write the code

    -- Natural language description of the coding problem
    -- Remove an element from a given array of integers at a specified index...

    -- Pre-condition (declared first so the implementation can refer to it)
    def removeElement_pre (s : Array Int) (k : Nat) : Prop :=
      k < s.size -- the index must be smaller than the array size

    -- Code implementation
    def removeElement (s : Array Int) (k : Nat) (h_precond : removeElement_pre s k) : Array Int :=
      s.eraseIdx! k

    -- Post-condition
    def removeElement_post (s : Array Int) (k : Nat) (result: Array Int)
        (h_precond : removeElement_pre s k) : Prop :=
      result.size = s.size - 1 ∧ -- Only one element is removed
      (∀ i, i < k → result[i]! = s[i]!) ∧ -- Elements before index k remain unchanged
      (∀ i, i < result.size → i ≥ k → result[i]! = s[i + 1]!) -- Elements after are shifted

Prove the code

    -- Formal proof (establishing code-specification alignment)
    theorem removeElement_spec (s: Array Int) (k: Nat) (h_precond : removeElement_pre s k) :
        removeElement_post s k (removeElement s k h_precond) h_precond := by
      unfold removeElement removeElement_post
      unfold removeElement_pre at h_precond
      simp_all
      unfold Array.eraseIdx!
      simp [h_precond]
      constructor
      · intro i hi
        have hi' : i < s.size - 1 := by
          have hk := Nat.le_sub_one_of_lt h_precond
          exact Nat.lt_of_lt_of_le hi hk
        rw [Array.getElem!_eq_getD, Array.getElem!_eq_getD]
        unfold Array.getD
        simp [hi', Nat.lt_trans hi h_precond, Array.getElem_eraseIdx, hi]
      · intro i hi hi'
        rw [Array.getElem!_eq_getD, Array.getElem!_eq_getD]
        unfold Array.getD
        have hi'' : i + 1 < s.size := by exact Nat.add_lt_of_lt_sub hi
        simp [hi, hi'']
        have : ¬ i < k := by simp [hi']
        simp [Array.getElem_eraseIdx, this]

Test the code

    -- Positive test with valid inputs and output
    (s : #[1, 2, 3, 4, 5]) (k : 2) (result : #[1, 2, 4, 5])

    -- Negative test: inputs violate the pre-condition (k < s.size)
    (s : #[1, 2, 3, 4, 5]) (k : 5)

    -- Negative test: output violates the first clause of the post-condition (size)
    (s : #[1, 2, 3, 4, 5]) (k : 2) (result : #[1, 2, 4])

    -- Negative test: output violates the second clause of the post-condition (prefix unchanged)
    (s : #[1, 2, 3, 4, 5]) (k : 2) (result : #[2, 2, 4, 5])

    -- Negative test: output violates the third clause of the post-condition (suffix shifted)
    (s : #[1, 2, 3, 4, 5]) (k : 2) (result : #[1, 2, 4, 4])
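To make the link between specification and tests concrete, here is an executable Python mirror of the pre- and post-condition, run against the five cases listed. It is only a sketch for intuition (the function names are mine): running a spec on examples is testing, while the Lean theorem covers every input.

```python
from typing import List


def remove_element(s: List[int], k: int) -> List[int]:
    return s[:k] + s[k + 1:]


def pre(s: List[int], k: int) -> bool:
    return 0 <= k < len(s)


def post(s: List[int], k: int, result: List[int]) -> bool:
    # Assumes pre(s, k) holds; the size clause is checked first so that the
    # index accesses in the other two clauses stay in range.
    return (
        len(result) == len(s) - 1
        and all(result[i] == s[i] for i in range(k))
        and all(result[i] == s[i + 1] for i in range(k, len(result)))
    )


s = [1, 2, 3, 4, 5]
print(pre(s, 2) and post(s, 2, [1, 2, 4, 5]))   # positive test
print(pre(s, 5))                                # pre-condition violated
print(post(s, 2, [1, 2, 4]))                    # first clause violated
print(post(s, 2, [2, 2, 4, 5]))                 # second clause violated
print(post(s, 2, [1, 2, 4, 4]))                 # third clause violated
print(post(s, 2, remove_element(s, 2)))         # implementation meets the spec
```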

🔴 Breakpoint

And now for a word from our sponsors!

Dependable AI

Intelligent or not...

Building systems that last is

HARD

When it comes to AI...

The real challenge isn't model accuracy.

It's system reliability under UNCERTAINTY.

Typical ML focuses on

But the model is only the beginning

We need to move from models to systems!

This thread reveals 3 things:

- Engineers don't know their history

- Tool creators have massive egos

- The importance of modelling the model

Dependable AI Mindset

- Expect failure

- Design for recovery

- Monitor everything

- Keep humans around

Engineering Best Practices

Because good intentions are not enough!

Data

Garbage in, garbage out

AI systems learn from data

If the data is wrong, incomplete, or drifting,

the system will fail.

Your model is only as good as your data

Focus on:

- data validation

- dataset versioning

- distribution monitoring

- label quality checks
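As a minimal sketch of the first item, data validation can start as a schema of expected types and ranges checked before any record reaches training or inference. The field names and bounds below are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class FieldSpec:
    kind: type
    minimum: float
    maximum: float


# Assumption: a made-up schema for a patient-monitoring record.
SCHEMA: Dict[str, FieldSpec] = {
    "age": FieldSpec(int, 0, 120),
    "heart_rate": FieldSpec(float, 20.0, 250.0),
}


def validate(record: dict) -> List[str]:
    """Return a list of human-readable problems; an empty list means valid."""
    errors = []
    for name, spec in SCHEMA.items():
        if name not in record:
            errors.append(f"{name}: missing")
            continue
        value = record[name]
        if not isinstance(value, spec.kind):
            errors.append(f"{name}: expected {spec.kind.__name__}")
        elif not spec.minimum <= value <= spec.maximum:
            errors.append(f"{name}: {value} outside [{spec.minimum}, {spec.maximum}]")
    return errors


print(validate({"age": 42, "heart_rate": 61.5}))
print(validate({"age": -3}))
```

Because NaN fails every comparison, a NaN heart rate is reported as out of range rather than slipping through.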

You don’t control your model

your data does

Model

Accuracy isn't reliability

A high benchmark score does not guarantee

safe real-world behavior

Good numbers are not enough

Evaluate for:

- robustness

- edge cases

- distribution shift

- calibration
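Calibration is the least familiar item on this list, so here is a small sketch of one common metric, Expected Calibration Error (ECE), using equal-width confidence bins. The bin count and the toy data are assumptions.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float], correct: Sequence[bool], n_bins: int = 10
) -> float:
    """Weighted average gap between stated confidence and observed accuracy."""
    total = len(confidences)
    if total == 0:
        return 0.0
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[index].append((conf, ok))
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(conf for conf, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += len(bucket) / total * abs(avg_conf - accuracy)
    return ece


# A model that says "90% sure" but is right only half of the time.
overconfident = expected_calibration_error([0.9] * 10, [True] * 5 + [False] * 5)
print(round(overconfident, 3))
```

A model can top the accuracy leaderboard and still be badly calibrated, which matters as soon as a confidence threshold decides who handles the case.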

Test the failure modes

not just the average case.

Observability

If you can’t see it, you can’t trust it.

Watch everything, don't fly blind

Track:

- data drift

- prediction drift

- system health

- anomaly signals
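Data drift can be tracked with something as simple as the Population Stability Index (PSI) between a reference sample and live traffic. The sketch below is one possible implementation in plain Python; the bin count, the smoothing constant and the rule-of-thumb thresholds (below 0.1 stable, above 0.25 significant shift) are assumptions to tune per use case.

```python
import math
import random
from typing import List, Sequence


def psi(reference: Sequence[float], live: Sequence[float],
        n_bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index using equal-width bins over the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant reference sample

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * n_bins
        for value in values:
            index = int((value - lo) / width)
            counts[max(0, min(n_bins - 1, index))] += 1  # clip out-of-range values
        return [max(count / len(values), eps) for count in counts]

    ref_p, live_p = proportions(reference), proportions(live)
    return sum((l - r) * math.log(l / r) for r, l in zip(ref_p, live_p))


rng = random.Random(0)
reference = [rng.gauss(0.0, 1.0) for _ in range(5000)]
same = [rng.gauss(0.0, 1.0) for _ in range(5000)]
shifted = [rng.gauss(1.5, 1.0) for _ in range(5000)]

psi_same = psi(reference, same)
psi_shifted = psi(reference, shifted)
print(psi_same < 0.1, psi_shifted > 0.25)
```

The same function works for prediction drift: feed it model scores instead of input features.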

Dogs not barking?

Silent failures are the most dangerous failures.

Guardrails

Expect failure. Design for safety.

Models will eventually fail.

Systems must handle that safely.

Build the safety net

Common patterns:

- confidence thresholds

- fallback logic

- human escalation

- policy checks

Reliable systems don't fail silently...

They fail gracefully.

Humans

AI works best when we are around

What machines can't replace (yet!)

Humans provide:

- context

- judgment

- accountability

Design systems that allow:

- review

- intervention

- override

    # Predict: AI takes a shot...
    result, confidence = model.predict(input_data)

    # Check: Too unsure? Don't guess!
    if confidence < threshold:
        result = route_to_fallback() or route_to_human()

    # Log: Always leave a trail
    log_decision(input_data, result)
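The predict / check / log pattern above can be fleshed out into something runnable. Everything below is a stand-in: `model_predict`, `rule_based_fallback`, the threshold and the loan-style example are hypothetical, and "routing to a human" is reduced to returning a pending marker.

```python
import logging
from dataclasses import dataclass
from typing import Optional, Tuple

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("decisions")

CONFIDENCE_THRESHOLD = 0.8  # assumption: tuned per use case


@dataclass(frozen=True)
class Decision:
    result: str
    confidence: float
    source: str  # "model", "fallback" or "human"


def model_predict(input_data: dict) -> Tuple[str, float]:
    """Hypothetical model: confident on small amounts, unsure otherwise."""
    return ("approve", 0.95) if input_data["amount"] < 100 else ("approve", 0.55)


def rule_based_fallback(input_data: dict) -> Optional[str]:
    """Hypothetical policy check: hard limit, otherwise no opinion."""
    return "reject" if input_data["amount"] > 10_000 else None


def decide(input_data: dict) -> Decision:
    # Predict: AI takes a shot...
    result, confidence = model_predict(input_data)
    decision = Decision(result, confidence, "model")

    # Check: too unsure? Try the deterministic fallback, then escalate.
    if confidence < CONFIDENCE_THRESHOLD:
        fallback = rule_based_fallback(input_data)
        if fallback is not None:
            decision = Decision(fallback, confidence, "fallback")
        else:
            decision = Decision("pending_review", confidence, "human")

    # Log: always leave a trail, including the confidence and who decided.
    logger.info("input=%s decision=%s", input_data, decision)
    return decision


for amount in (50, 500, 50_000):
    print(amount, decide({"amount": amount}).source)
```

Logging the confidence and the decision source, not just the result, is what makes later audits and threshold tuning possible.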

Human in the loop

AI acts only when a

human approves each decision.

Human on the loop

AI acts autonomously, but humans

monitor and can intervene.

Human over the loop

AI operates independently, while humans

set goals and review outcomes.

"Quis custodiet ipsos custodes?"

Who will watch the watchmen?

Humans are not the weakness.

We are part of the safety system.

Dependability is not a feature

It's an engineering discipline.

AI that (actually) matters

AI where it matters most

NOT

Build smarter AI

BUT

Build trustworthy systems that safely amplify our capabilities.

AI needs to pivot

model accuracy $\rightarrow$ system reliability

benchmarks $\rightarrow$ real-world impact

research $\rightarrow$ engineering

Engineering is about solving

real problems for real people

Engineering does not stop at "it works"

it begins at "it lasts"

Build AI that matters

AI first, human always!

PRFAQ

LISBON – (Mar 2026)

A new talk titled 'Build AI That Matters'

introduces a practical framework for designing

dependable AI systems that deliver

real-world impact.

Why isn't model accuracy enough?

Production failures rarely originate

from the model itself.

$$\cdots$$

Dependability requires addressing

the entire system.

Doesn't adding reliability slow innovation?

No, it makes deployments sustainable.

$$\cdots$$

Without it, repeated failures erode trust

and slow adoption.

What role do humans play?

Humans are not replaced by AI.

$$\cdots$$

They are part of the system that ensures

safety and accountability.

What is the key takeaway?

AI creates value only when it is

reliable enough to be trusted.

$$\cdots$$

The future of AI will be shaped

not just by better models, but by better

engineering of the systems around them.

Thank you!

🙏